Archive for category Skyscanner

Lessons learnt at Skyscanner: Part One

Posted by Michael Okarimia in Skyscanner on December 6, 2021

Working for six years as a Data Engineer at Skyscanner has taught me some valuable lessons on how to build software software that can run at the scale of an “Internet Economy” user base

Version 1.0 of the Cloud native Data Platform: A tangle of hundreds of parallel data pipelines

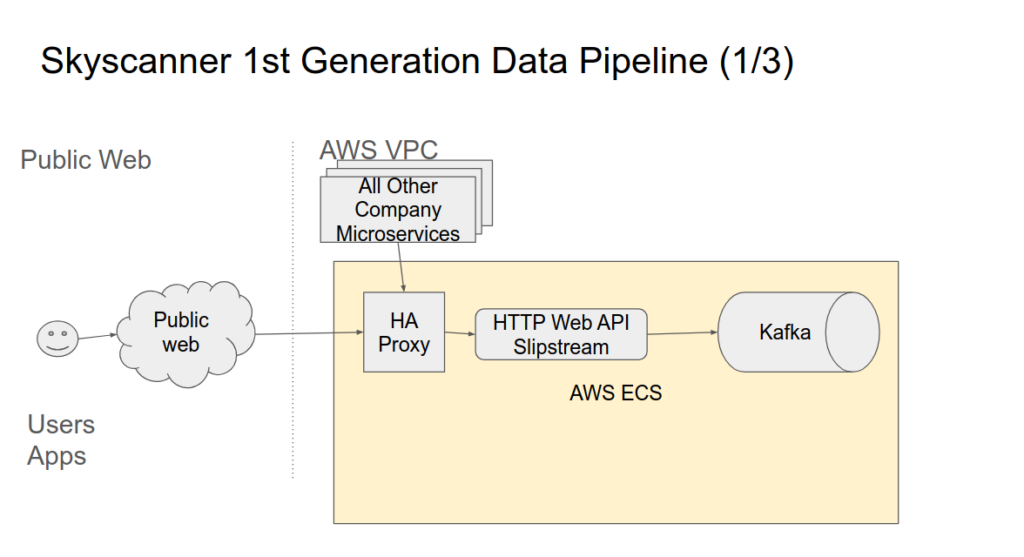

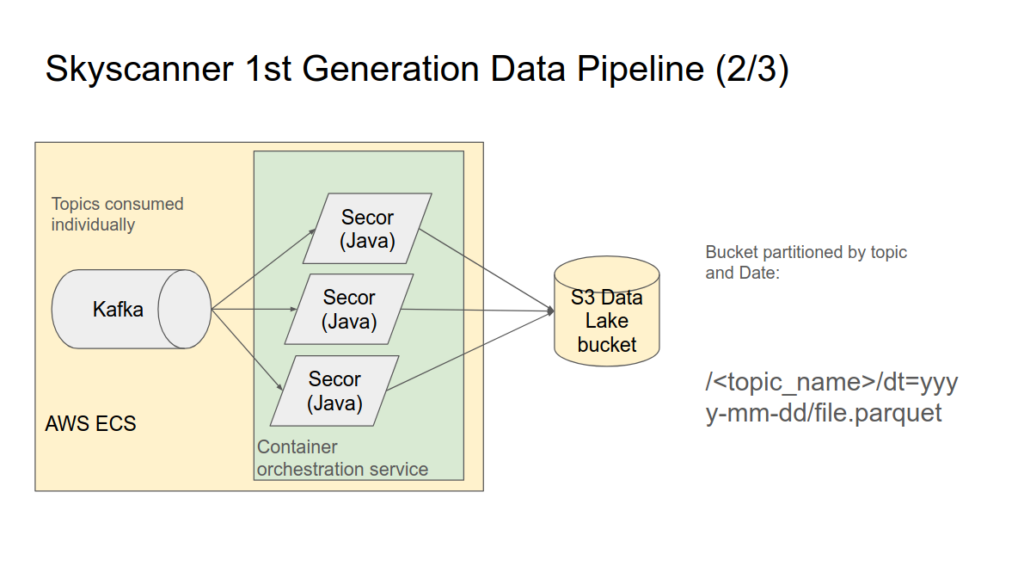

The first few years of my time at Skyscanner was focused on supporting part of the Data Platform which consumed data from the Unified Message stream (Kafka) and de-queued the messages into long term storage (AWS s3) and made this data available for querying in Hive Meta stores. This was done using containers running running a Java application which consumed the data and wrote it into AWS s3.

These containers ran software called Secor, and they were hosted instead AWS EC2 containers themselves running containerisation software called Rancher.

Data was partitioned in s3 by Kafka topic and then Date (dt=yyyy-mm-dd). Each new day would mean a new dt partition was created for existing topics by the Secor containers. The data was written in Parquet format. Volumes of data were in the order of around a Terra Byte a day.

When new Kafka topics were created, Secor containers were instantiated with the corresponding consumer groups to read these topics and write them to AWS s3.

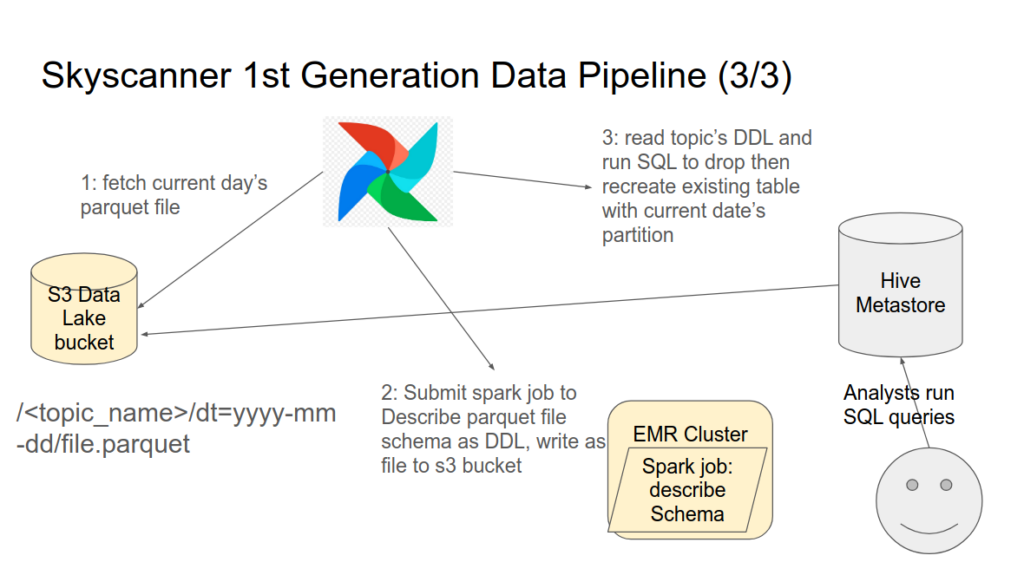

Once a day, the new s3 partitions by dt=yyyy-mm-dd would need to be added to Hive meta store in order for them to be queryable by downstream reporting tools such as Databricks.

I used Airflow to run DAGs which submitted a spark job for each topic once a day to a dedicated AWS EMR cluster. The spark jobs jobs would read the newest parquet files for that date, and generate the SQL DDL to create a new table in the Hive Meta store. A Python script in the DAG would read this SQL DDL and execute it against AWS Athena, which would drop and re-create the table and add the new s3 path to the table.

Operational Complexity

The were several hundred Kafka topics which were persisted into s3 and loaded into table in Hive meta-store.

There were at least two Containers consuming each Kafka topic, as each topic had at least two partitions. Each partition was consumed by one container. If there was higher number of messages produced to the topic, it would be scaled up to have more partitions, which would require an equal number of containers to consume that data. The largest topic had almost 100 partitions, and thus 100 containers to consume it.

The containerisation software ran on EC2 instances, which had to be manually scaled, which was error prone and time consuming. Many containers could run on a single EC2 host and often had to be manually scaled to different hosts.

Due to the hundreds of topics and tables involved in this system, it became complex and thus a rebuild of the Data Platform was commissioned. Me and my team were tasked to create a replacement.

This involves reaching out to customers of the platform to identify what the existing platform could not do and find places for improvement.

Problems encountered and addressing Customer’s Pain points

Customers biggest complaints regarding the data platform were, once you eventually onboarded a dataset, it was hard to find it, and difficult to check if it was complete.

- Onboarding a dataset was a multi step, complex series of manual tasks, which weren’t fully documented in one place.

- Schemas for new datasets had to be designed which required knowledge of the internals of the Data Platform.

- Testing new schemas required changes to production applications, and then more changes to promote that dataset to production.

- Even test Schemas required approval from group of data engineers who had limited time allocated for reviewing new schemas. As a result there was often a backlog of schemas for review, which created a bottleneck.

- Once the data was onboarded on to Production environment, discovery of the dataset was difficult

- There were no Data Quality metrics for a new dataset; no way to see if it was complete or on time.

Solving hard problems of scale and helping customers

In my next post I’ll describe how these problems were address by building a second generation unified Trusted Data Pipeline

What I’ve learnt at Skyscanner in my first three years

Posted by Michael Okarimia in Skyscanner on November 19, 2021

I joined Skyscanner in Jan 2018 as a Data Engineer in the Data tribe. It’s been a good experience and a massive learning curve. Despite the impact of the Coronavirus Pandemic occurring just as I felt I was getting into the swing of things, this has given me an opportunity to look back.

Here’s a little summary of what I learnt in my first three years:

- Operational work, toil, addressing errors making on call sane rotations are important, particularly during a time when there was a high staff turnover (due to the aviation sector shutting down during the peaks of the pandemic)

- Protobuf Schema design is hard. Protobuf is used to define schemas of all events sent to the Data platform and subsequently how they are stored as tables in Hive Metastore for later analytical querying. Whilst one can deprecate a field, one can not delete it, so schema design must ensure it is backward compatible and anticipates future needs.

- Trying to run a Kafka cluster on EC2 instances is a major undertaking. Avoid doing so where possible and use managed services such as AWS MSK or Kinesis instead).

- Streaming data is not always necessary. Use ETL batch processes if you can since they are repeatable.

- Metadata catalogue of datasets is key for Data Governance and also Data Quality. Being able identify the producers and data lineage of a table is very important.

- I learnt about how to build and run software which is Sarbine Oxley Compliant as well as how to implement GDPR Subject Access Request compliance and applying a relevant data Retention period.

- Cloud Cost monitoring in AWS is something that engineers need to be aware of to ensure cost are kept under control. This is where I learnt about AWS Cloudhealth.

- Good Documentation is key skill. Clear READMEs should document how to run the repo, test and build and deploy it. This will speed up onboarding new joiners

- Pairing remains an important skill to spread knowledge in a team and complete projects faster

Books worth reading:

Designing Data Intensive Applications by Martin Kleppman is excellent and well worth your time:

I would that Web Operations by John Allspaw gave a good overview of how to run highly scaleable web services. This gave me a good intellectual frame work for understanding how use Service Level Agreements, Service Level Indicators, Service Level Objectives to run a highly available service.

Examples of good practise when running a large Web Operation such as documentation, Retrospectives, Blame free Incident debriefs. Ensuring Runbooks exist and are up to date so the people responding to incidents have all the relevant information available to them.

Addressing Technical Debt is always going to a be a balance between new feature work and removing blockers to future changes. I found Michael Feather’s book, “Working Effectively With Legacy Code very insightful in how manage it.

There’s another project in the works so here’s a to a better year in 2022!

New Role: Data Engineer at Skyscanner

Posted by Michael Okarimia in Skyscanner on February 3, 2018

I was delighted to start a new role as a Data Engineer at Skyscanner in London this January. I’m looking forward to learning how to run data pipelines that are at the Internet Economy scale. This will be definitely Big Data! 😀